Contributions

GitHub source code: HERE

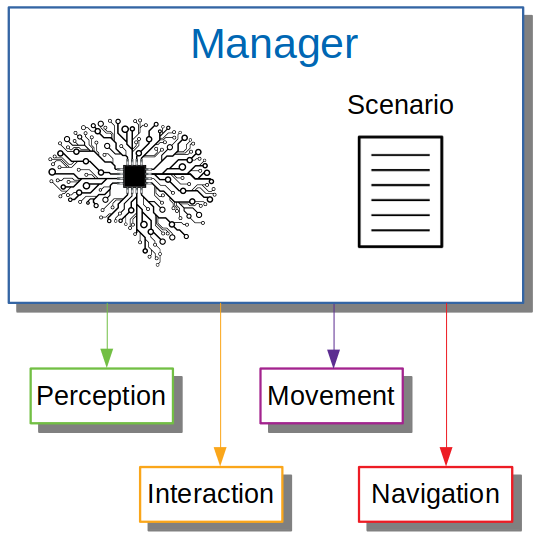

I. Overview of Our Architecture

Our architecture is built on five ROS modules: Reasoning, Perception, Navigation, Movement, and Interaction.

II. Main Contributions

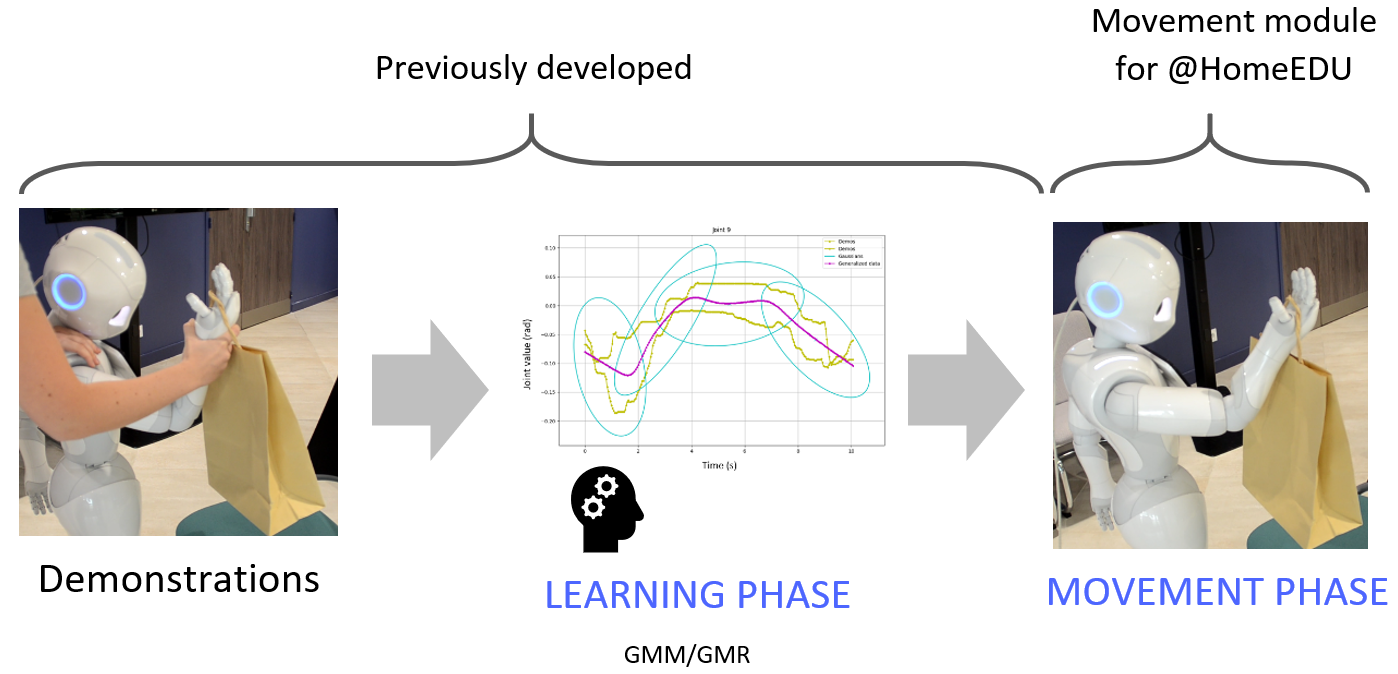

1. Movement Learning From Demonstrations

After developing a generic architecture that reproduces a movement, we needed to learn a movement from multiple demonstrations. The chosen model combines a Gaussian Mixture Model (GMM) with Gaussian Mixture Regression (GMR). The GMM generalizes the demonstrated movement as a set of Gaussians, and GMR then regenerates the movement from the GMM output. Each demonstration and each generated movement is saved in its own file. The user can therefore record demonstrations of a movement under a name of their choice and then start the learning. Because the learning is done beforehand, the learned movement runs in real time when the user starts it. The Pepper and NAO robots were tested in simulation using SoftBank Robotics' qiBullet simulator.
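The regression step can be illustrated with a minimal sketch. It assumes a GMM has already been fitted over (time, joint value) pairs and only shows how GMR blends the conditional means of the Gaussians to regenerate a trajectory. The function names, the dictionary layout, and the numeric parameters are hypothetical and are not taken from the RoboBreizh code.

```python
import math

def gaussian_pdf(t, mean, var):
    """Density of a 1-D Gaussian evaluated at t."""
    return math.exp(-0.5 * (t - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)

def gmr(t, components):
    """Expected joint value at time t, given a fitted (time, value) GMM.

    Each component is a dict with keys: prior, mu_t, mu_x, var_t, cov_tx.
    """
    # Responsibility of each Gaussian for the query time t.
    weights = [c["prior"] * gaussian_pdf(t, c["mu_t"], c["var_t"]) for c in components]
    total = sum(weights)
    estimate = 0.0
    for w, c in zip(weights, components):
        # Conditional mean of the value given t for this Gaussian.
        conditional_mean = c["mu_x"] + c["cov_tx"] / c["var_t"] * (t - c["mu_t"])
        estimate += (w / total) * conditional_mean
    return estimate

# Hypothetical two-component model of one joint angle over a 1 s movement.
model = [
    {"prior": 0.5, "mu_t": 0.25, "mu_x": 0.2, "var_t": 0.02, "cov_tx": 0.016},
    {"prior": 0.5, "mu_t": 0.75, "mu_x": 0.9, "var_t": 0.02, "cov_tx": 0.004},
]

# Regenerate the movement on a regular time grid.
trajectory = [gmr(step / 10.0, model) for step in range(11)]
```

Since the model is fitted offline, replaying a movement only requires evaluating `gmr` at each time step, which is cheap enough to run in real time.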

More information about this project

Example video

2. Human-Robot Interaction

2.1. NLP

RoboBreizh takes advantage of the latest advances in NLP by using BERT for Joint-Slot Filling (joint intent classification and slot filling). This task classifies an utterance into an intent class and then fills the related slots as arguments. Our contribution is a newly generated dataset that supports the annotation format of the Joint-Slot Filling task, together with the fine-tuning of a JointBERT model, with a focus on pronoun disambiguation in the General Purpose Service Robot (GPSR) task.
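To make the task concrete, here is a small sketch of what one annotated utterance could look like and how the slots are recovered from per-token tags. The BIO tagging scheme is a common convention for slot filling. The class, the intent name, and the slot names below are illustrative assumptions, not the actual labels of the RoboBreizh dataset.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class AnnotatedUtterance:
    tokens: List[str]
    slot_tags: List[str]  # one BIO tag per token
    intent: str           # single intent label for the whole utterance

def extract_slots(example):
    """Group BIO-tagged tokens into (slot_name, text) pairs."""
    slots, name, words = [], None, []
    for token, tag in zip(example.tokens, example.slot_tags):
        if tag.startswith("B-"):
            # A new slot begins: close the previous one, if any.
            if name is not None:
                slots.append((name, " ".join(words)))
            name, words = tag[2:], [token]
        elif tag.startswith("I-") and name == tag[2:]:
            words.append(token)
        else:
            # Outside any slot: close the current one, if any.
            if name is not None:
                slots.append((name, " ".join(words)))
            name, words = None, []
    if name is not None:
        slots.append((name, " ".join(words)))
    return slots

# Hypothetical GPSR-style command.
example = AnnotatedUtterance(
    tokens="bring the red cup to the kitchen".split(),
    slot_tags=["O", "O", "B-object", "I-object", "O", "O", "B-destination"],
    intent="bring",
)
slots = extract_slots(example)
```

A joint model predicts `intent` and `slot_tags` together from the same encoded utterance, so the downstream planner receives an action and its arguments in one pass.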

2.2. Speaker Recognition

We provide a solution that learns to recognize a speaker's voice regardless of the language spoken. The proposed model exploits SincNet, whose filters require only a low and a high cutoff frequency as learned parameters. This reduces the number of parameters learned per filter and makes that number independent of the filter length. In addition, we combined SincNet with a Siamese network, using an algorithm to train Siamese neural networks for speaker identification.
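The two-parameter idea can be sketched as follows: a band-pass kernel of any length is computed entirely from a low and a high cutoff, as the difference of two windowed sinc low-pass filters. This is a plain-Python illustration under assumed values (101 taps, Hamming window, cutoffs in cycles per sample). It is not the trained network, and the function names are hypothetical.

```python
import math

def sinc(x):
    """Unnormalized sinc, sin(x) / x, with the limit value at zero."""
    return 1.0 if x == 0 else math.sin(x) / x

def sinc_bandpass(f_low, f_high, taps=101):
    """Windowed band-pass kernel defined only by two cutoffs (cycles per sample)."""
    half = (taps - 1) // 2
    kernel = []
    for i in range(taps):
        n = i - half
        # The difference of two low-pass sinc filters is a band-pass filter.
        ideal = (2 * f_high * sinc(2 * math.pi * f_high * n)
                 - 2 * f_low * sinc(2 * math.pi * f_low * n))
        hamming = 0.54 - 0.46 * math.cos(2 * math.pi * i / (taps - 1))
        kernel.append(ideal * hamming)
    return kernel

def gain(kernel, freq):
    """Magnitude response of the kernel at a normalized frequency."""
    re = sum(h * math.cos(2 * math.pi * freq * i) for i, h in enumerate(kernel))
    im = sum(h * math.sin(2 * math.pi * freq * i) for i, h in enumerate(kernel))
    return math.hypot(re, im)

# One filter: only f_low and f_high would be learned, whatever the kernel length.
band = sinc_bandpass(0.10, 0.20)
```

Calling `gain(band, 0.15)` and `gain(band, 0.40)` shows the filter passing an in-band frequency and rejecting an out-of-band one.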

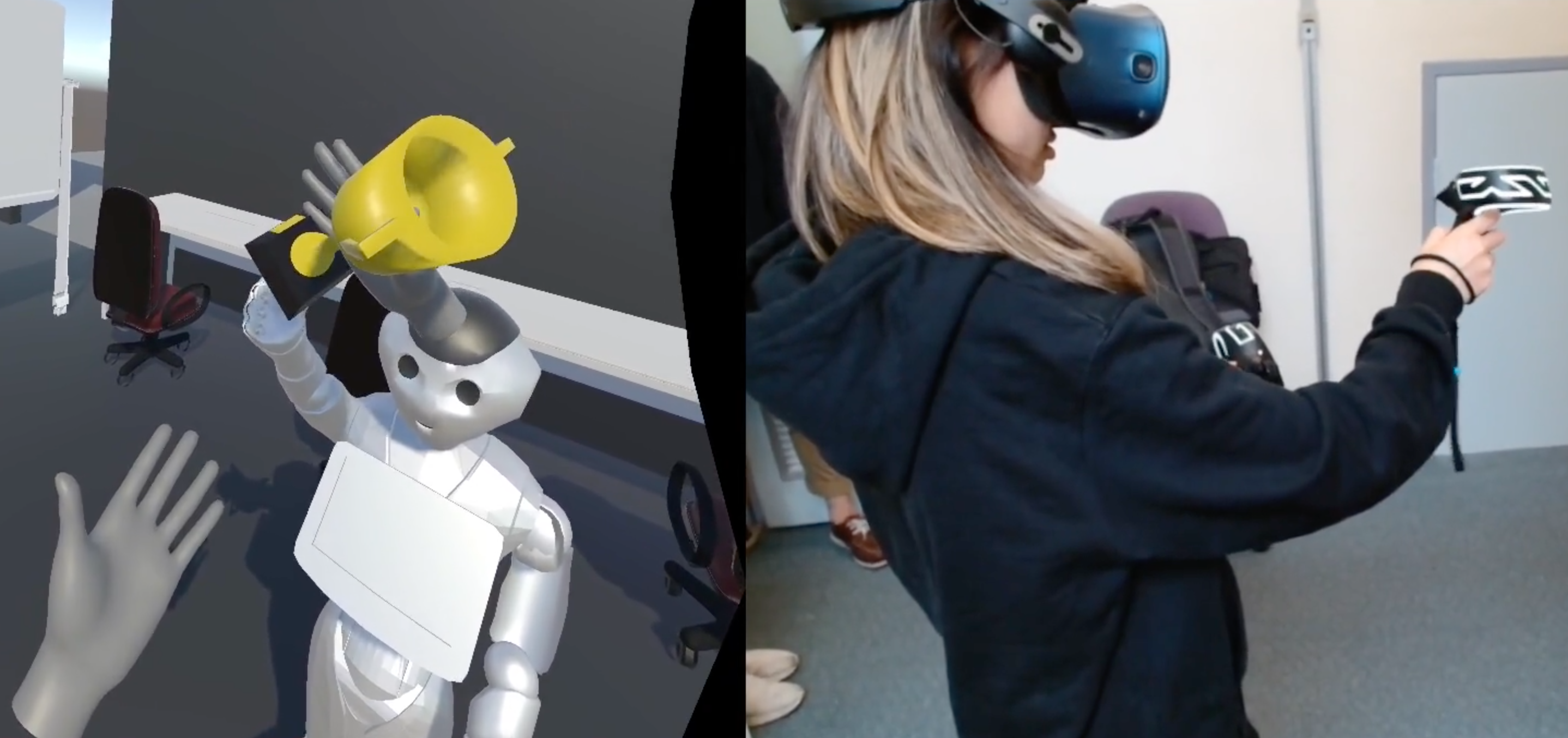

2.3. Virtual reality interactions

RoboBreizh is developing a new VR system. The idea is to provide safety while testing new solutions. In addition, users can interact remotely with the virtual robot.

III. Others

1. Reasoning

The robot's reasoning module (the manager) is a high-level structure. Module outputs are stored as object instances and remain available as input later. The manager executes tasks in the required order, scheduling Pepper's behavior, and it also handles task priority. The architecture was designed to make adding new behaviors easy: a new behavior can be implemented without editing any external module. To avoid execution lags, orders are canceled if they take too long to execute.
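The scheduling behavior described above can be sketched with a small priority queue and a per-task timeout. This is an illustrative sketch built on Python's `asyncio`, not the actual manager: the `Manager` class, the task names, and the timeout values are all assumptions.

```python
import asyncio
import heapq
import itertools

class Manager:
    """Runs queued tasks by priority and cancels any that exceed their timeout."""

    def __init__(self):
        self._queue = []
        self._order = itertools.count()  # tie-breaker keeps insertion order
        self.results = {}                # module outputs, reusable by later tasks

    def add(self, name, task, priority=0, timeout=1.0):
        # A lower number means a higher priority.
        heapq.heappush(self._queue, (priority, next(self._order), name, task, timeout))

    async def run(self):
        while self._queue:
            _, _, name, task, timeout = heapq.heappop(self._queue)
            try:
                self.results[name] = await asyncio.wait_for(task(self.results), timeout)
            except asyncio.TimeoutError:
                self.results[name] = None  # order canceled: it took too long

# Hypothetical behaviors; each receives the outputs stored so far.
async def detect_person(results):
    return {"name": "guest", "distance_m": 1.2}

async def slow_navigation(results):
    await asyncio.sleep(1.0)
    return "arrived"

async def greet(results):
    return "Hello " + results["detect_person"]["name"]

manager = Manager()
manager.add("greet", greet, priority=2)
manager.add("slow_navigation", slow_navigation, priority=1, timeout=0.05)
manager.add("detect_person", detect_person, priority=0)
asyncio.run(manager.run())
```

In this sketch a new behavior is just another coroutine registered with `add`, so nothing outside the manager needs to change when one is introduced.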

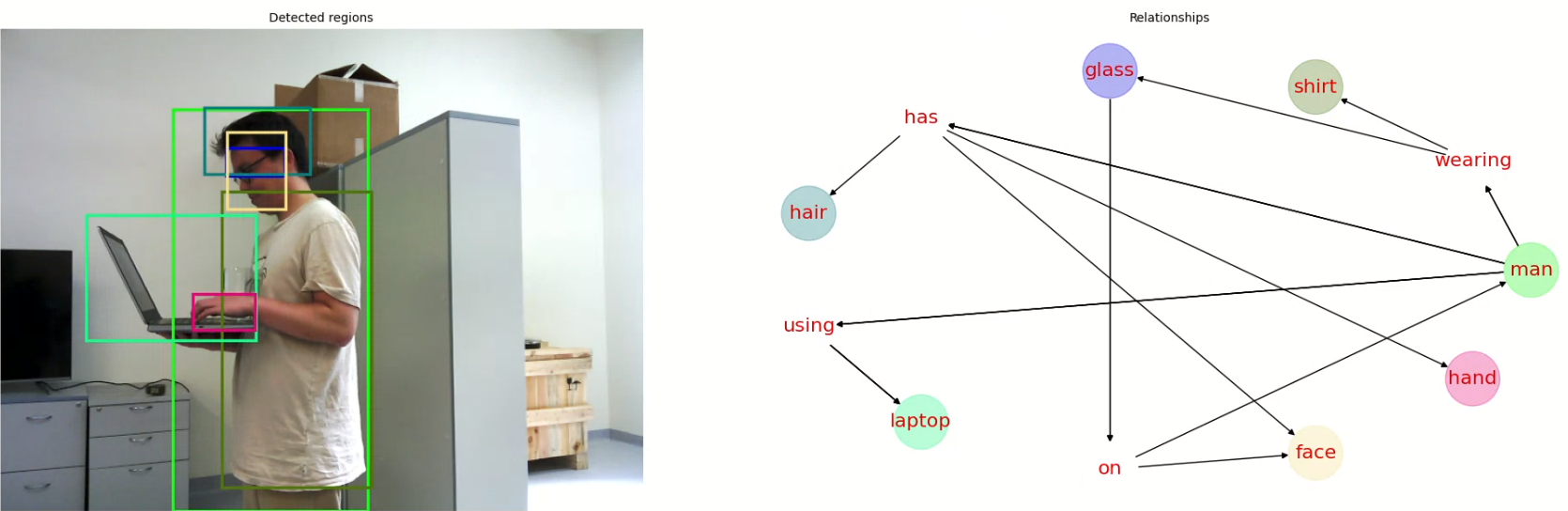

2. Perception

The perception module offers a collection of ROS services with options, including a distance filter that prevents objects outside the arena from being detected. A custom combination of pre-trained Single Shot Detector (SSD) models with Inception and MobileNet is used to detect all types of objects in the arena. We also use recent work on Visual Relationship Detection (VRD) to infer relationships between entities in the scene in an end-to-end manner. A CNN-based model predicts the joint locations of multiple people from an RGB image.
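As an illustration of the distance filter option, the sketch below drops detections whose estimated 3D position lies beyond a maximum range from the robot. The `Detection` fields, the frame convention, and the threshold are hypothetical and do not reflect the actual service interface.

```python
import math
from dataclasses import dataclass

@dataclass
class Detection:
    label: str
    x: float  # position in the robot frame, in metres
    y: float
    z: float

def filter_by_distance(detections, max_distance_m):
    """Keep only detections within max_distance_m of the robot."""
    return [
        d for d in detections
        if math.sqrt(d.x ** 2 + d.y ** 2 + d.z ** 2) <= max_distance_m
    ]

# Hypothetical detections: the chair is assumed to stand outside the arena.
detections = [
    Detection("cup", 1.0, 0.2, 0.8),
    Detection("chair", 6.0, 1.0, 0.5),
]
in_arena = filter_by_distance(detections, max_distance_m=4.0)
```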

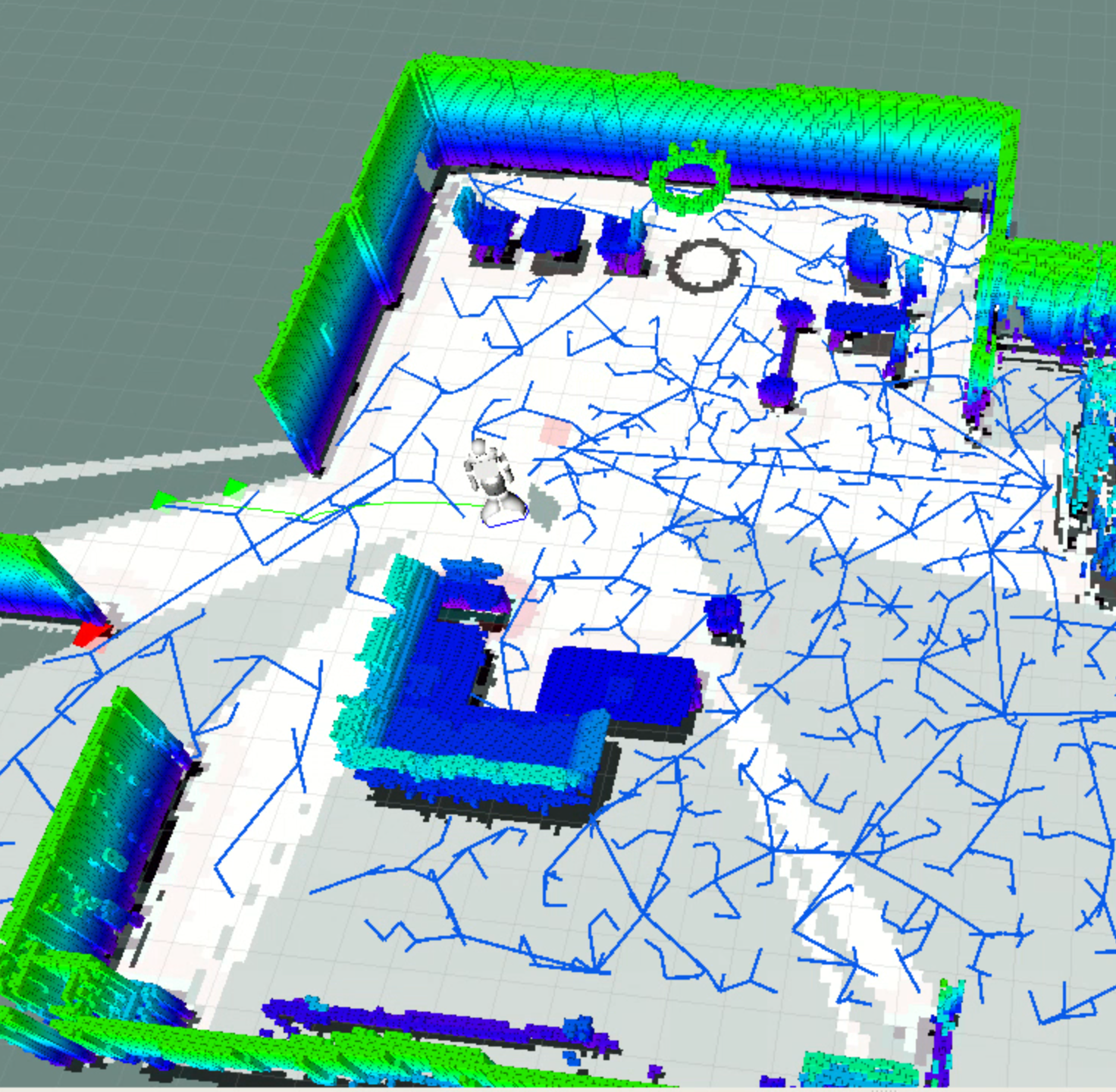

3. Navigation

A new generation of maps should detect objects at height to avoid collisions. Using Visual SLAM, OctoMap estimates the probability of obstacle presence from a point cloud and generates a voxel map that can be visualized in RViz. First, the depth camera image is converted into a point cloud, and the relevant arguments are passed as parameters to the octomap_server node. The 3D map is then projected onto a 2D map. OctoMap's filters (notably the height filter) are useful here to avoid projecting lamps, beams, or even the floor itself onto the 2D map. RoboBreizh can use OctoMap or RTAB-Map, depending on the characteristics of the environment to map. RoboBreizh uses a new dynamic local planner inside the ROS Navigation Stack to avoid moving obstacles.
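The effect of the height filter on the 3D-to-2D projection can be sketched in a few lines. This is a simplified stand-in for what octomap_server does internally: it takes occupied 3D points, keeps those within a height band, and marks the corresponding 2D grid cells. The function name, the resolution, and the height limits are illustrative assumptions.

```python
import math

def project_to_2d(points, resolution=0.25, min_z=0.05, max_z=1.3):
    """Return the set of occupied 2-D grid cells from occupied 3-D points.

    Points below min_z (the floor) or above max_z (lamps, beams)
    are ignored so they do not appear as obstacles on the 2-D map.
    """
    occupied = set()
    for x, y, z in points:
        if min_z <= z <= max_z:
            cell = (math.floor(x / resolution), math.floor(y / resolution))
            occupied.add(cell)
    return occupied

# Hypothetical occupied points, in metres.
points = [
    (1.0, 0.0, 0.0),   # floor: filtered out
    (1.0, 0.5, 0.75),  # table edge: kept
    (2.0, 1.0, 2.5),   # ceiling lamp: filtered out
]
cells = project_to_2d(points)
```

Without the height band, both the floor and the lamp would be projected as obstacles and block paths that the robot can actually take.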

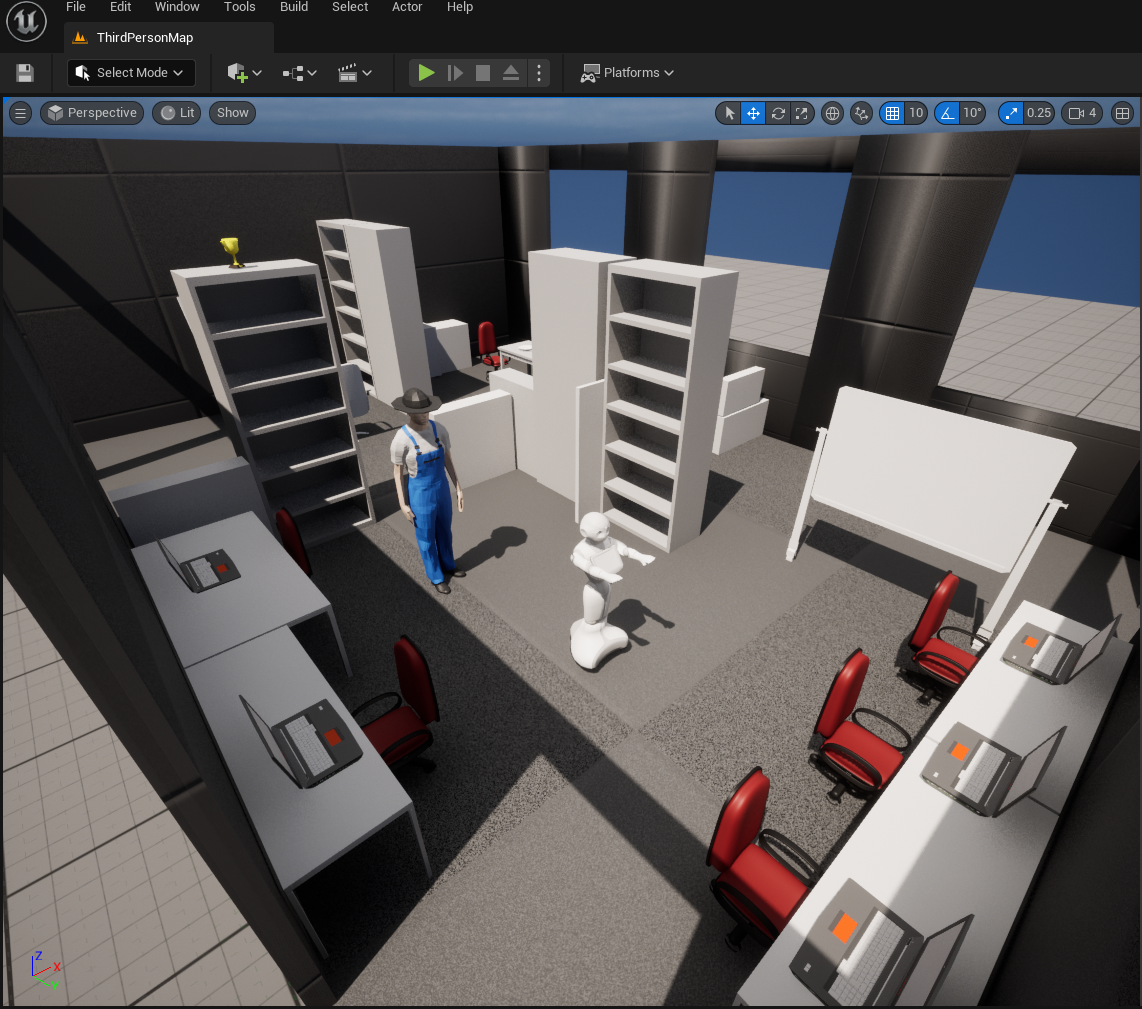

4. Simulation

All our code works with Gazebo, qiBullet, Unity 2021, and Unreal Engine 5. Such photorealistic engines will help to train machine learning algorithms.

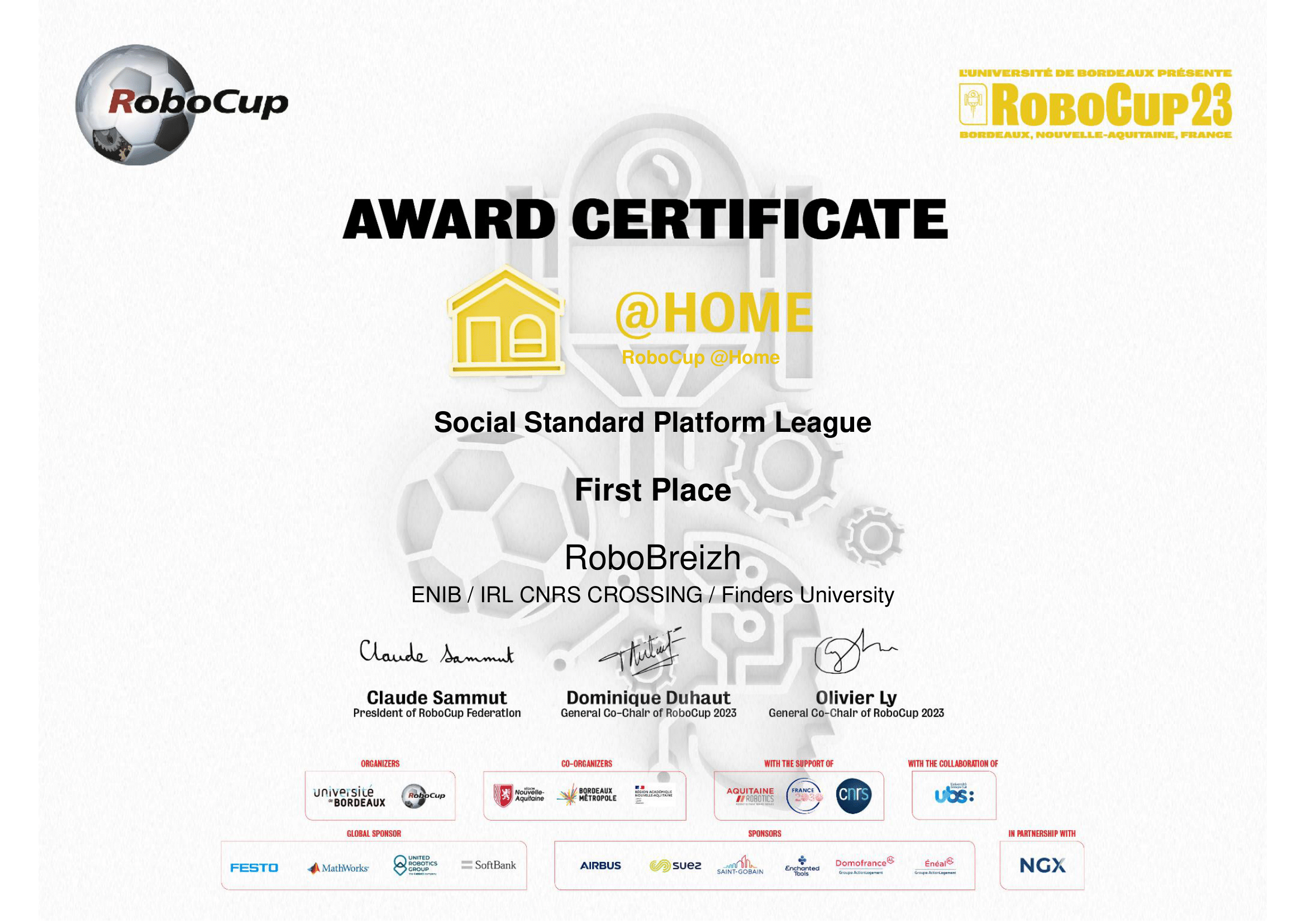

2023

RoboCup@Home 2023: 1st

Social Standard Platform League (SSPL)

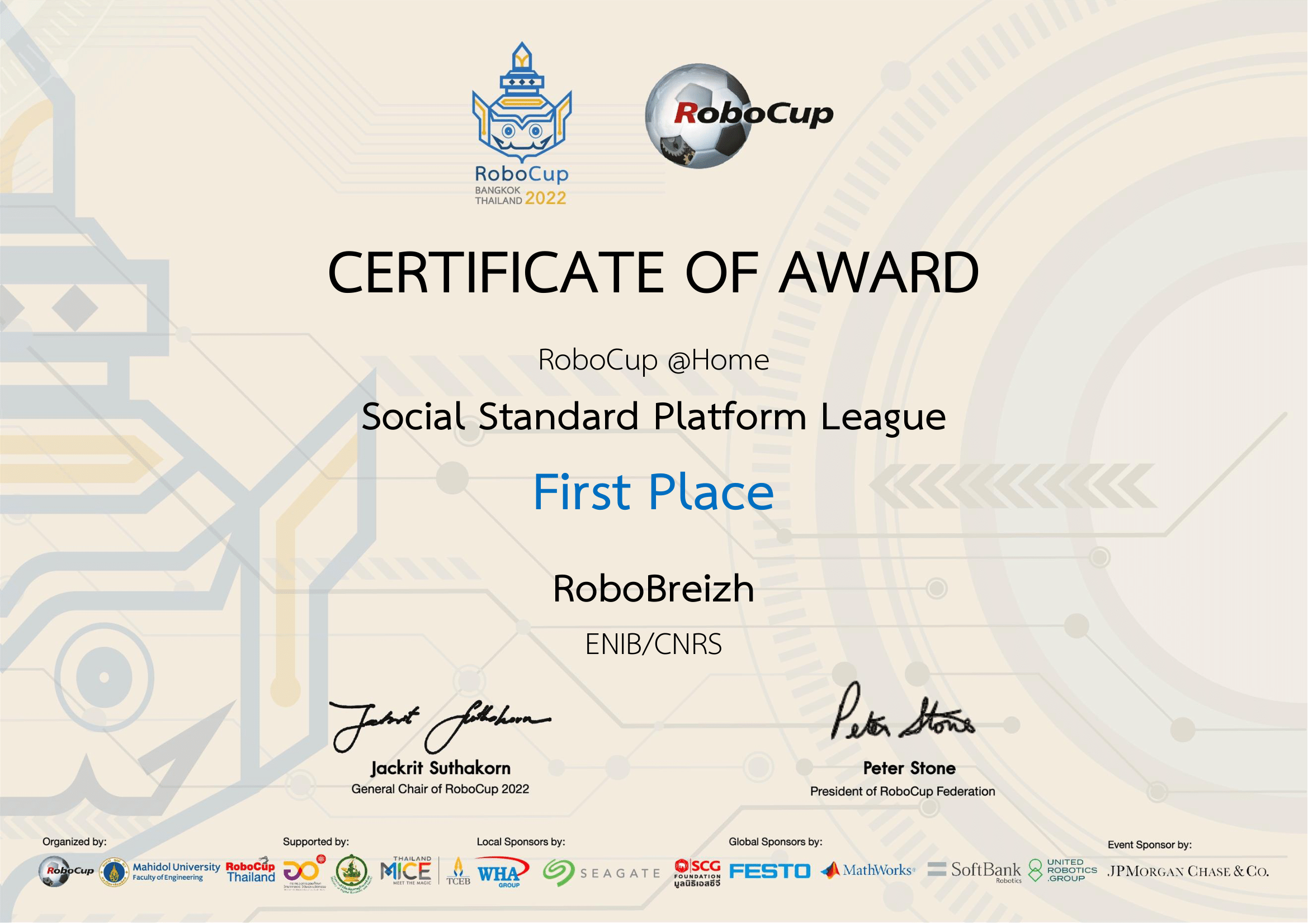

2022

RoboCup@Home 2022: 1st

Social Standard Platform League (SSPL)

2021

RoboCup@Home 2021 Virtual Competition: 3rd

Social Standard Platform League (SSPL)

"The RoboCup@Home league aims to develop service and assistive robot technology with high relevance for future personal domestic applications. It is the largest international annual competition for autonomous service robots and is part of the RoboCup initiative."

RoboCup@Home league

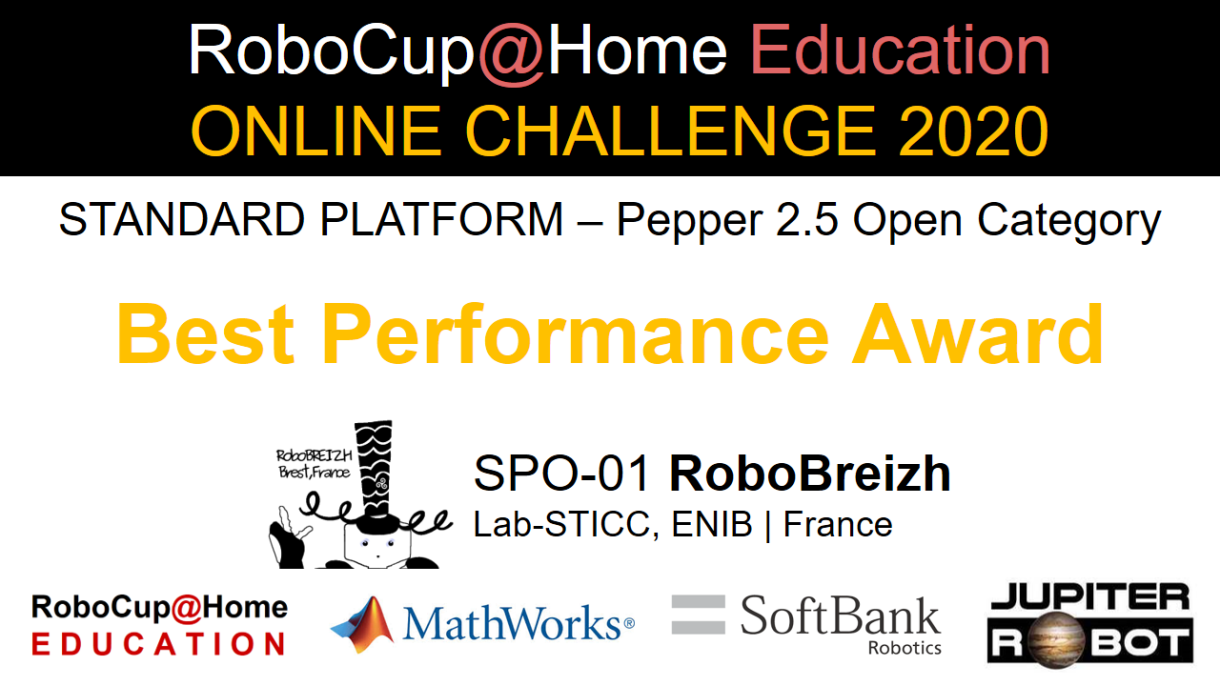

2020

RoboCup@Home Education Online Challenge: 1st

Social Standard Platform League (SSPL)

RoboCup@Home Education is an educational initiative in RoboCup@Home that promotes educational efforts to boost RoboCup@Home participation and artificial intelligence (AI)-focused service robot development.

RoboCup@Home Education Online Challenge official website